See 《附003.Kubeadm部署Kubernetes》 (Appendix 003: Deploying Kubernetes with kubeadm).

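· This guide uses kubeadm to deploy Kubernetes 1.18.3;

· etcd runs stacked (co-located) on the master nodes;

· Keepalived: provides a highly available VIP;

· HAProxy: runs as a systemd service and reverse-proxies to port 6443 on the three masters;

· Other main components deployed:

· Metrics: resource metrics;

· Dashboard: the Kubernetes web UI;

· Helm: the Kubernetes package manager;

· Ingress: exposes Kubernetes services;

· Longhorn: dynamic storage provisioning for Kubernetes.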


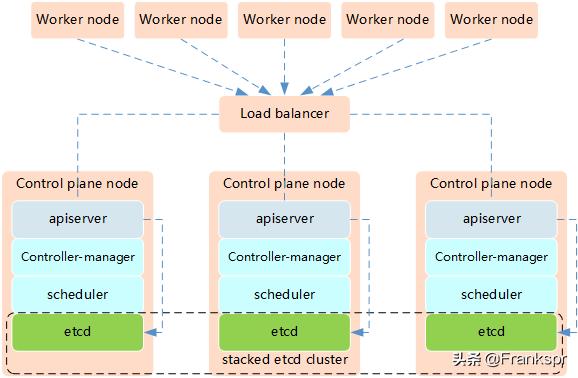

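High availability in Kubernetes mainly means high availability of the control plane: multiple sets of master components and etcd members, with worker nodes reaching the masters through a load balancer.

Characteristics of the stacked topology, in which etcd is co-located with the master components:

1. Requires fewer machines

2. Simple to deploy and easy to manage

3. Easy to scale out

4. Higher risk: when one host goes down, the cluster loses both a master and an etcd member, so redundancy takes a bigger hit.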
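To make the redundancy trade-off concrete, the sketch below computes the etcd majority quorum and the number of members that may fail for a given cluster size. The function names are illustrative and not part of any tool used in this guide; the arithmetic is the usual majority rule, floor(N/2) + 1.

```shell
#!/bin/bash
# Majority quorum for an N-member etcd cluster: floor(N/2) + 1.
etcd_quorum() {
    local members=$1
    echo $(( members / 2 + 1 ))
}

# Number of members that can fail while the cluster still has quorum.
etcd_fault_tolerance() {
    local members=$1
    echo $(( members - (members / 2 + 1) ))
}

# Print the figures for a few common cluster sizes.
for n in 1 3 5; do
    echo "members=${n} quorum=$(etcd_quorum "$n") tolerated_failures=$(etcd_fault_tolerance "$n")"
done
```

With the three stacked masters used here, losing one host still leaves a quorum of two; losing a second host does not.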

Note: this lab uses a Keepalived + HAProxy architecture to make Kubernetes highly available.

[root@master01 ~]# hostnamectl set-hostname master01 # set the hostname on each of the other nodes accordingly

[root@master01 ~]# cat >> /etc/hosts << EOF

172.24.8.71 master01

172.24.8.72 master02

172.24.8.73 master03

172.24.8.74 worker01

172.24.8.75 worker02

172.24.8.76 worker03

EOF

[root@master01 ~]# vi k8sinit.sh

# Initialize the machine. This needs to be executed on every machine.

# Install Docker

useradd -m docker

yum -y install yum-utils device-mapper-persistent-data lvm2

yum-config-manager --add-repo http://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

yum -y install docker-ce

mkdir -p /etc/docker

cat > /etc/docker/daemon.json <<EOF

{

"registry-mirrors": ["https://dbzucv6w.mirror.aliyuncs.com"],

"exec-opts": ["native.cgroupdriver=systemd"],

"log-driver": "json-file",

"log-opts": {

"max-size": "100m"

},

"storage-driver": "overlay2",

"storage-opts": [

"overlay2.override_kernel_check=true"

]

}

EOF

systemctl restart docker

systemctl enable docker

systemctl status docker

# Disable the SELinux.

sed -i 's/^SELINUX=.*/SELINUX=disabled/' /etc/selinux/config

# Turn off and disable the firewalld.

systemctl stop firewalld

systemctl disable firewalld

# Modify related kernel parameters & Disable the swap.

cat > /etc/sysctl.d/k8s.conf << EOF

net.ipv4.ip_forward = 1

net.bridge.bridge-nf-call-ip6tables = 1

net.bridge.bridge-nf-call-iptables = 1

net.ipv4.tcp_tw_recycle = 0

vm.swappiness = 0

vm.overcommit_memory = 1

vm.panic_on_oom = 0

net.ipv6.conf.all.disable_ipv6 = 1

EOF

modprobe br_netfilter    # load first so the net.bridge.* keys exist when sysctl runs

sysctl -p /etc/sysctl.d/k8s.conf >&/dev/null

swapoff -a

sed -i '/ swap / s/^\(.*\)$/#\1/g' /etc/fstab

modprobe br_netfilter

# Add ipvs modules

cat > /etc/sysconfig/modules/ipvs.modules <<EOF

#!/bin/bash

modprobe -- ip_vs

modprobe -- ip_vs_rr

modprobe -- ip_vs_wrr

modprobe -- ip_vs_sh

modprobe -- nf_conntrack_ipv4

modprobe -- nf_conntrack

EOF

chmod 755 /etc/sysconfig/modules/ipvs.modules

bash /etc/sysconfig/modules/ipvs.modules

# Install rpm

yum install -y conntrack ntpdate ntp ipvsadm ipset jq iptables curl sysstat libseccomp wget

# Update kernel

rpm --import http://down.linuxsb.com:8888/RPM-GPG-KEY-elrepo.org

rpm -Uvh http://down.linuxsb.com:8888/elrepo-release-7.0-4.el7.elrepo.noarch.rpm

yum --disablerepo="*" --enablerepo="elrepo-kernel" install -y kernel-ml

sed -i 's/^GRUB_DEFAULT=.*/GRUB_DEFAULT=0/' /etc/default/grub

grub2-mkconfig -o /boot/grub2/grub.cfg

yum update -y

# Reboot the machine.

# reboot

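Note: some features may require a kernel upgrade; for the procedure see 《018.Linux升级内核》 (018: Upgrading the Linux Kernel). In kernel 4.19 and later, nf_conntrack_ipv4 has been replaced by nf_conntrack.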
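Because the conntrack module name depends on the kernel version, a script that may run both before and after the kernel upgrade can pick the name at run time. The following is a minimal sketch; the helper name conntrack_module_for is hypothetical, and it assumes the release string starts with "major.minor" as printed by uname -r.

```shell
#!/bin/bash
# Print the conntrack module name that matches a kernel release string.
# Kernels 4.19 and later use nf_conntrack; older ones use nf_conntrack_ipv4.
conntrack_module_for() {
    local release=$1              # e.g. "3.10.0-1127.el7.x86_64"
    local major minor
    major=${release%%.*}          # text before the first dot
    minor=${release#*.}           # drop "major."
    minor=${minor%%.*}            # keep text up to the next dot
    minor=${minor%%[!0-9]*}       # strip any non-numeric suffix such as "-rc1"
    if (( major > 4 || (major == 4 && minor >= 19) )); then
        echo nf_conntrack
    else
        echo nf_conntrack_ipv4
    fi
}

# Example use inside ipvs.modules (requires root, so it is left commented out):
# modprobe -- "$(conntrack_module_for "$(uname -r)")"
```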

To make it easier to distribute files and run commands remotely, this lab sets up SSH trust from master01 to the other nodes.

[root@master01 ~]# ssh-keygen -f ~/.ssh/id_rsa -N ''

[root@master01 ~]# ssh-copy-id -i ~/.ssh/id_rsa.pub root@master01

[root@master01 ~]# ssh-copy-id -i ~/.ssh/id_rsa.pub root@master02

[root@master01 ~]# ssh-copy-id -i ~/.ssh/id_rsa.pub root@master03

[root@master01 ~]# ssh-copy-id -i ~/.ssh/id_rsa.pub root@worker01

[root@master01 ~]# ssh-copy-id -i ~/.ssh/id_rsa.pub root@worker02

[root@master01 ~]# ssh-copy-id -i ~/.ssh/id_rsa.pub root@worker03

Note: this only needs to be done on the master01 node.

[root@master01 ~]# vi environment.sh

#!/bin/sh

#****************************************************************#

# ScriptName: environment.sh

# Author: xhy

# Create Date: 2020-05-30 16:30

# Modify Author: xhy

# Modify Date: 2020-06-15 17:55

# Version:

#***************************************************************#

# Array of master node IPs

export MASTER_IPS=(172.24.8.71 172.24.8.72 172.24.8.73)

# Array of hostnames matching the master IPs

export MASTER_NAMES=(master01 master02 master03)

# Array of worker node IPs

export NODE_IPS=(172.24.8.74 172.24.8.75 172.24.8.76)

# Array of hostnames matching the worker IPs

export NODE_NAMES=(worker01 worker02 worker03)

# Array of all node IPs in the cluster

export ALL_IPS=(172.24.8.71 172.24.8.72 172.24.8.73 172.24.8.74 172.24.8.75 172.24.8.76)

# Array of hostnames matching all node IPs

export ALL_NAMES=(master01 master02 master03 worker01 worker02 worker03)

[root@master01 ~]# source environment.sh

[root@master01 ~]# chmod +x *.sh

[root@master01 ~]# for all_ip in ${ALL_IPS[@]}

do

echo ">>> ${all_ip}"

scp -rp /etc/hosts root@${all_ip}:/etc/hosts

scp -rp k8sinit.sh root@${all_ip}:/root/

ssh root@${all_ip} "bash /root/k8sinit.sh"

done
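The IP and hostname arrays in environment.sh are parallel: entry i of ALL_IPS belongs to entry i of ALL_NAMES. As a sketch of how the two can be walked together, the snippet below prints /etc/hosts style lines from them. The function name print_hosts_entries is hypothetical, and the arrays are repeated here only so the example runs on its own.

```shell
#!/bin/bash
# Same values as in environment.sh, repeated so the example is self-contained.
ALL_IPS=(172.24.8.71 172.24.8.72 172.24.8.73 172.24.8.74 172.24.8.75 172.24.8.76)
ALL_NAMES=(master01 master02 master03 worker01 worker02 worker03)

# Print one "IP hostname" line per node, pairing the arrays by index.
print_hosts_entries() {
    local i
    if (( ${#ALL_IPS[@]} != ${#ALL_NAMES[@]} )); then
        echo "ALL_IPS and ALL_NAMES differ in length" >&2
        return 1
    fi
    for i in "${!ALL_IPS[@]}"; do
        printf '%s %s\n' "${ALL_IPS[$i]}" "${ALL_NAMES[$i]}"
    done
}

print_hosts_entries
```

The length check catches the easy mistake of adding a node to one array but not the other.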


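Install the following packages on every machine:

· kubeadm: the command used to bootstrap the cluster;

· kubelet: runs on every node in the cluster and starts Pods and containers;

· kubectl: the command-line tool for talking to the cluster.

kubeadm does not install or manage kubelet or kubectl, so you must make sure their versions satisfy the requirements of the Kubernetes control plane that kubeadm installs. A mismatch can lead to unexpected errors or problems.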
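A version check of this kind can be sketched in a few lines of shell. The rule encoded below is an assumption based on the skew policy that applied around Kubernetes 1.18 (kubelet must not be newer than the API server and may be at most two minor versions older); the function names are hypothetical and both versions are assumed to share the same major version.

```shell
#!/bin/bash
# Extract the minor number from strings such as "v1.18.3" or "1.18.3-0".
minor_of() {
    local v=${1#v}        # drop an optional leading "v"
    v=${v#*.}             # drop "major."
    echo "${v%%.*}"       # keep the minor part
}

# Succeeds when the kubelet version is acceptable for the control plane:
# not newer, and at most two minor versions older (assumed 1.18-era policy).
kubelet_skew_ok() {
    local cp_minor kubelet_minor
    cp_minor=$(minor_of "$1")
    kubelet_minor=$(minor_of "$2")
    (( kubelet_minor <= cp_minor && cp_minor - kubelet_minor <= 2 ))
}

if kubelet_skew_ok v1.18.3 1.18.3-0; then
    echo "kubelet 1.18.3-0 is compatible with control plane v1.18.3"
fi
```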

[root@master01 ~]# for all_ip in ${ALL_IPS[@]}

do

echo ">>> ${all_ip}"

ssh root@${all_ip} "cat <<EOF > /etc/yum.repos.d/kubernetes.repo

[kubernetes]

name=Kubernetes

baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64/

enabled=1

gpgcheck=1

repo_gpgcheck=1

gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg

EOF"

ssh root@${all_ip} "yum install -y kubeadm-1.18.3-0.x86_64 kubelet-1.18.3-0.x86_64 kubectl-1.18.3-0.x86_64 --disableexcludes=kubernetes"

ssh root@${all_ip} "systemctl enable kubelet"

done

[root@master01 ~]# yum search -y kubelet --showduplicates # list the available versions

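Note: the commands above are run only on master01 and install the packages on all nodes automatically. There is no need to start kubelet at this point; it is started automatically during initialization. If you do start it now it reports errors, which can be ignored.

Explanation: three dependencies are installed along with these packages: cri-tools, kubernetes-cni and socat. socat is a kubelet dependency; cri-tools is the command-line tool for the CRI (Container Runtime Interface).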


[root@master01 ~]# for master_ip in ${MASTER_IPS[@]}

do

echo ">>> ${master_ip}"

ssh root@${master_ip} "yum -y install gcc gcc-c++ make libnl libnl-devel libnfnetlink-devel openssl-devel wget openssh-clients systemd-devel zlib-devel pcre-devel libnl3-devel"

ssh root@${master_ip} "wget http://down.linuxsb.com:8888/software/haproxy-2.1.6.tar.gz"

ssh root@${master_ip} "tar -zxvf haproxy-2.1.6.tar.gz"

ssh root@${master_ip} "cd haproxy-2.1.6/ && make ARCH=x86_64 TARGET=linux-glibc USE_PCRE=1 USE_OPENSSL=1 USE_ZLIB=1 USE_SYSTEMD=1 PREFIX=/usr/local/haproxy && make install PREFIX=/usr/local/haproxy"

ssh root@${master_ip} "cp /usr/local/haproxy/sbin/haproxy /usr/sbin/"

ssh root@${master_ip} "useradd -r haproxy && usermod -G haproxy haproxy"

ssh root@${master_ip} "mkdir -p /etc/haproxy && cp -r /root/haproxy-2.1.6/examples/errorfiles/ /usr/local/haproxy/"

done

[root@master01 ~]# for master_ip in ${MASTER_IPS[@]}

do

echo ">>> ${master_ip}"

ssh root@${master_ip} "yum -y install gcc gcc-c++ make libnl libnl-devel libnfnetlink-devel openssl-devel"

ssh root@${master_ip} "wget http://down.linuxsb.com:8888/software/keepalived-2.0.20.tar.gz"

ssh root@${master_ip} "tar -zxvf keepalived-2.0.20.tar.gz"

ssh root@${master_ip} "cd keepalived-2.0.20/ && ./configure --sysconf=/etc --prefix=/usr/local/keepalived && make && make install"

done

Note: the commands above are run only on master01 and install the software on all master nodes automatically.

[root@master01 ~]# wget http://down.linuxsb.com:8888/hakek8s.sh # download the automated deployment script

[root@master01 ~]# chmod u+x hakek8s.sh

[root@master01 ~]# vi hakek8s.sh

#!/bin/sh

#****************************************************************#

# ScriptName: hakek8s.sh

# Author: xhy

# Create Date: 2020-06-08 20:00

# Modify Author: xhy

# Modify Date: 2020-06-15 18:15

# Version: v2

#***************************************************************#

#######################################

# set variables below to create the config files, all files will create at ./config directory

#######################################

# master keepalived virtual ip address

export K8SHA_VIP=172.24.8.100

# master01 ip address

export K8SHA_IP1=172.24.8.71

# master02 ip address

export K8SHA_IP2=172.24.8.72

# master03 ip address

export K8SHA_IP3=172.24.8.73

# master01 hostname

export K8SHA_HOST1=master01

# master02 hostname

export K8SHA_HOST2=master02

# master03 hostname

export K8SHA_HOST3=master03

# master01 network interface name

export K8SHA_NETINF1=eth0

# master02 network interface name

export K8SHA_NETINF2=eth0

# master03 network interface name

export K8SHA_NETINF3=eth0

# keepalived auth_pass config

export K8SHA_KEEPALIVED_AUTH=412f7dc3bfed32194d1600c483e10ad1d

# kubernetes CIDR pod subnet

export K8SHA_PODCIDR=10.10.0.0

# kubernetes CIDR svc subnet

export K8SHA_SVCCIDR=10.20.0.0

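[root@master01 ~]# ./hakek8s.sh

Explanation: the steps above are run only on master01. Running hakek8s.sh generates the following configuration files:

· kubeadm-config.yaml: the kubeadm initialization config, in the current directory

· keepalived: the Keepalived config files, in /etc/keepalived on each master node

· haproxy: the HAProxy config file, in /etc/haproxy/ on each master node

· calico.yaml: the Calico network plugin manifest, in config/calico/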

[root@master01 ~]# cat kubeadm-config.yaml # check the cluster initialization config

apiVersion: kubeadm.k8s.io/v1beta2
kind: ClusterConfiguration
networking:
  serviceSubnet: "10.20.0.0/16"                 # Service CIDR
  podSubnet: "10.10.0.0/16"                     # Pod CIDR
  dnsDomain: "cluster.local"
kubernetesVersion: "v1.18.3"                    # version to install
controlPlaneEndpoint: "172.24.8.100:16443"      # API VIP address and HAProxy port
apiServer:
  certSANs:
  - master01
  - master02
  - master03
  - 127.0.0.1
  - 172.24.8.71
  - 172.24.8.72
  - 172.24.8.73
  - 172.24.8.100
  timeoutForControlPlane: 4m0s
certificatesDir: "/etc/kubernetes/pki"
imageRepository: "k8s.gcr.io"
---
apiVersion: kubeproxy.config.k8s.io/v1alpha1
kind: KubeProxyConfiguration
featureGates:
  SupportIPVSProxyMode: true
mode: ipvs

Note: the steps above are run only on master01.

[root@master01 ~]# cat /etc/keepalived/keepalived.conf

[root@master01 ~]# cat /etc/keepalived/check_apiserver.sh # verify the Keepalived configuration

[root@master01 ~]# for master_ip in ${MASTER_IPS[@]}

do

echo ">>> ${master_ip}"

ssh root@${master_ip} "systemctl start haproxy.service && systemctl enable haproxy.service"

ssh root@${master_ip} "systemctl start keepalived.service && systemctl enable keepalived.service"

ssh root@${master_ip} "systemctl status keepalived.service | grep Active"

ssh root@${master_ip} "systemctl status haproxy.service | grep Active"

done

[root@master01 ~]# for all_ip in ${ALL_IPS[@]}

do

echo ">>> ${all_ip}"

ssh root@${all_ip} "ping -c1 172.24.8.100"

done # wait about 30s before running this check

Note: the commands above are run only on master01 and start the services on all master nodes automatically.
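Instead of waiting a fixed 30 seconds before checking the VIP, the check can be retried until it succeeds. The helper below is a minimal sketch of such a retry loop; the name retry and its argument order are choices made for this example, not something provided by hakek8s.sh.

```shell
#!/bin/bash
# retry ATTEMPTS DELAY_SECONDS COMMAND [ARGS...]
# Runs COMMAND until it succeeds or ATTEMPTS is exhausted.
retry() {
    local attempts=$1 delay=$2
    shift 2
    local i
    for (( i = 1; i <= attempts; i++ )); do
        if "$@"; then
            return 0
        fi
        # Sleep between attempts, but not after the last one.
        if (( i < attempts )); then
            sleep "$delay"
        fi
    done
    return 1
}

# Example (left commented out because it needs the lab network):
# retry 10 3 ping -c1 -W1 172.24.8.100 && echo "VIP is reachable"
```

The command is passed as separate arguments rather than a string, so it is executed without eval and quoting is preserved.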


[root@master01 ~]# kubeadm --kubernetes-version=v1.18.3 config images list # list the required images

[root@master01 ~]# cat config/downimage.sh # confirm the versions

#!/bin/sh

#****************************************************************#

# ScriptName: downimage.sh

# Author: xhy

# Create Date: 2020-05-29 19:55

# Modify Author: xhy

# Modify Date: 2020-06-10 19:15

# Version: v2

#***************************************************************#

KUBE_VERSION=v1.18.3

CALICO_VERSION=v3.14.1

CALICO_URL=calico

KUBE_PAUSE_VERSION=3.2

ETCD_VERSION=3.4.3-0

CORE_DNS_VERSION=1.6.7

GCR_URL=k8s.gcr.io

METRICS_SERVER_VERSION=v0.3.6

INGRESS_VERSION=0.32.0

CSI_PROVISIONER_VERSION=v1.4.0

CSI_NODE_DRIVER_VERSION=v1.2.0

CSI_ATTACHER_VERSION=v2.0.0

CSI_RESIZER_VERSION=v0.3.0

ALIYUN_URL=registry.cn-hangzhou.aliyuncs.com/google_containers

UCLOUD_URL=uhub.service.ucloud.cn/uxhy

QUAY_URL=quay.io

kubeimages=(kube-proxy:${KUBE_VERSION}

kube-scheduler:${KUBE_VERSION}

kube-controller-manager:${KUBE_VERSION}

kube-apiserver:${KUBE_VERSION}

pause:${KUBE_PAUSE_VERSION}

etcd:${ETCD_VERSION}

coredns:${CORE_DNS_VERSION}

metrics-server-amd64:${METRICS_SERVER_VERSION}

)

for kubeimageName in ${kubeimages[@]} ; do

docker pull $UCLOUD_URL/$kubeimageName

docker tag $UCLOUD_URL/$kubeimageName $GCR_URL/$kubeimageName

docker rmi $UCLOUD_URL/$kubeimageName

done

calimages=(cni:${CALICO_VERSION}

pod2daemon-flexvol:${CALICO_VERSION}

node:${CALICO_VERSION}

kube-controllers:${CALICO_VERSION})

for calimageName in ${calimages[@]} ; do

docker pull $UCLOUD_URL/$calimageName

docker tag $UCLOUD_URL/$calimageName $CALICO_URL/$calimageName

docker rmi $UCLOUD_URL/$calimageName

done

ingressimages=(nginx-ingress-controller:${INGRESS_VERSION})

for ingressimageName in ${ingressimages[@]} ; do

docker pull $UCLOUD_URL/$ingressimageName

docker tag $UCLOUD_URL/$ingressimageName $QUAY_URL/kubernetes-ingress-controller/$ingressimageName

docker rmi $UCLOUD_URL/$ingressimageName

done

csiimages=(csi-provisioner:${CSI_PROVISIONER_VERSION}

csi-node-driver-registrar:${CSI_NODE_DRIVER_VERSION}

csi-attacher:${CSI_ATTACHER_VERSION}

csi-resizer:${CSI_RESIZER_VERSION}

)

for csiimageName in ${csiimages[@]} ; do

docker pull $UCLOUD_URL/$csiimageName

docker tag $UCLOUD_URL/$csiimageName $QUAY_URL/k8scsi/$csiimageName

docker rmi $UCLOUD_URL/$csiimageName

done

[root@master01 ~]# for all_ip in ${ALL_IPS[@]}

do

echo ">>> ${all_ip}"

scp -rp config/downimage.sh root@${all_ip}:/root/

ssh root@${all_ip} "bash downimage.sh &"

done

Note: the commands above are run only on master01 and pull the images on all nodes automatically.

[root@master01 ~]# docker images # verify the images

[root@master01 ~]# kubeadm init --config=kubeadm-config.yaml --upload-certs

You can now join any number of the control-plane node running the following command on each as root:

kubeadm join 172.24.8.100:16443 --token xifg5c.3mvph3nwx1srdf7l \
    --discovery-token-ca-cert-hash sha256:031a8758ddad5431be4132ecd6445f33b17c2192c11e010209705816a4a53afd \
    --control-plane --certificate-key 560c926e508ed6011cd35fe120a5163d3ca32e16b745cf1877da970e3e0982f0

Please note that the certificate-key gives access to cluster sensitive data, keep it secret!

As a safeguard, uploaded-certs will be deleted in two hours; If necessary, you can use

"kubeadm init phase upload-certs --upload-certs" to reload certs afterward.

Then you can join any number of worker nodes by running the following on each as root:

kubeadm join 172.24.8.100:16443 --token xifg5c.3mvph3nwx1srdf7l \
    --discovery-token-ca-cert-hash sha256:031a8758ddad5431be4132ecd6445f33b17c2192c11e010209705816a4a53afd

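Note: the token above is valid for 24 hours by default. The current token can be looked up as follows:

kubeadm token list

If the token has expired, generate a new one with the first command below; the second command prints the CA certificate hash: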

kubeadm token create

openssl x509 -pubkey -in /etc/kubernetes/pki/ca.crt | openssl rsa -pubin -outform der 2>/dev/null | openssl dgst -sha256 -hex | sed 's/^.* //'
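Once a token and hash are known, the join command can be assembled from its parts. The sketch below shows how the pieces fit together; build_join_command is a hypothetical helper written for this example. As an alternative, kubeadm token create --print-join-command prints a ready-made worker join command.

```shell
#!/bin/bash
# build_join_command ENDPOINT TOKEN CA_HASH [CERTIFICATE_KEY]
# Prints a worker join command, or a control-plane join command when a
# certificate key is supplied.
build_join_command() {
    local endpoint=$1 token=$2 ca_hash=$3 cert_key=${4:-}
    local cmd="kubeadm join ${endpoint} --token ${token} --discovery-token-ca-cert-hash sha256:${ca_hash}"
    if [ -n "$cert_key" ]; then
        cmd+=" --control-plane --certificate-key ${cert_key}"
    fi
    echo "$cmd"
}

# Example with placeholder values (not real credentials):
build_join_command 172.24.8.100:16443 abcdef.0123456789abcdef 0123abcd
```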

[root@master01 ~]# mkdir -p $HOME/.kube

[root@master01 ~]# sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

[root@master01 ~]# sudo chown $(id -u):$(id -g) $HOME/.kube/config

[root@master01 ~]# cat << EOF >> ~/.bashrc    # set the KUBECONFIG environment variable
export KUBECONFIG=$HOME/.kube/config
EOF

[root@master01 ~]# echo "source <(kubectl completion bash)" >> ~/.bashrc

[root@master01 ~]# source ~/.bashrc

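Appendix: the initialization roughly goes through these steps:

· [kubelet-start] writes the kubelet config file "/var/lib/kubelet/config.yaml"

· [certificates] generates the various certificates

· [kubeconfig] generates the kubeconfig files

· [bootstraptoken] generates a token; record it, because kubeadm join needs it later when nodes are added to the cluster

Note: initialization only needs to run on master01. If it fails, reset with kubeadm reset && rm -rf $HOME/.kube.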

[root@master02 ~]# kubeadm join 172.24.8.100:16443 --token xifg5c.3mvph3nwx1srdf7l \
    --discovery-token-ca-cert-hash sha256:031a8758ddad5431be4132ecd6445f33b17c2192c11e010209705816a4a53afd \
    --control-plane --certificate-key 560c926e508ed6011cd35fe120a5163d3ca32e16b745cf1877da970e3e0982f0

[root@master02 ~]# mkdir -p $HOME/.kube

[root@master02 ~]# sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

[root@master02 ~]# sudo chown $(id -u):$(id -g) $HOME/.kube/config

[root@master02 ~]# cat << EOF >> ~/.bashrc    # set the KUBECONFIG environment variable
export KUBECONFIG=$HOME/.kube/config
EOF

[root@master02 ~]# echo "source <(kubectl completion bash)" >> ~/.bashrc

[root@master02 ~]# source ~/.bashrc

Note: run the same steps on master03 to add it to the cluster's control plane.

Note: if the join fails, reset with kubeadm reset && rm -rf $HOME/.kube.


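· Calico is a secure L3 networking and network policy provider.

· Canal combines Flannel and Calico to provide networking and network policy.

· Cilium is an L3 network and network policy plugin that can transparently enforce HTTP/API/L7 policies. It supports both routing and overlay/encapsulation modes.

· Contiv provides configurable networking for a range of use cases (native L3 using BGP, overlay using vxlan, classic L2, and Cisco-SDN/ACI) and a rich policy framework. The Contiv project is fully open source. Its installer offers both kubeadm-based and non-kubeadm-based options.

· Flannel is an overlay network provider that can be used with Kubernetes.

· Romana is a Layer 3 solution for Pod networks that also supports the NetworkPolicy API. The project publishes installation details for its kubeadm add-on.

· Weave Net provides networking and network policy that keep working on both sides of a network partition, and it needs no external database.

· CNI-Genie lets Kubernetes connect seamlessly to a choice of CNI plugins such as Flannel, Calico, Canal, Romana, or Weave.

Note: this guide uses the Calico plugin.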

[root@master01 ~]# kubectl taint nodes --all node-role.kubernetes.io/master- # allow workloads to be scheduled on the masters

Note: once the internal applications are deployed, run kubectl taint node master01 node-role.kubernetes.io/master="":NoSchedule to make the node master-only again.

[root@master01 ~]# cat config/calico/calico.yaml # check the configuration

……
            - name: CALICO_IPV4POOL_CIDR
              value: "10.10.0.0/16"             # check the Pod CIDR
……
            - name: IP_AUTODETECTION_METHOD
              value: "interface=eth.*"          # check the NIC used between nodes
            # Auto-detect the BGP IP address.
            - name: IP
              value: "autodetect"
……

[root@master01 ~]# kubectl apply -f config/calico/calico.yaml

[root@master01 ~]# kubectl get pods --all-namespaces -o wide # check the deployment

[root@master01 ~]# kubectl get nodes

[root@master01 ~]# vi /etc/kubernetes/manifests/kube-apiserver.yaml

……

- --service-node-port-range=1-65535

……

Note: make this change on all master nodes.
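The --service-node-port-range flag widens the set of ports a NodePort Service may request; without it the API server uses the default range 30000-32767. The sketch below checks whether a port falls inside a given range. The function name nodeport_in_range is hypothetical and exists only for this illustration.

```shell
#!/bin/bash
# nodeport_in_range PORT [RANGE]
# Succeeds when PORT lies inside RANGE (format "low-high").
# RANGE defaults to the Kubernetes default NodePort range.
nodeport_in_range() {
    local port=$1 range=${2:-30000-32767}
    local low=${range%-*} high=${range#*-}
    (( port >= low && port <= high ))
}

# Port 80 is rejected by default but allowed with the widened range.
nodeport_in_range 80 || echo "80 is outside the default range"
nodeport_in_range 80 1-65535 && echo "80 is inside 1-65535"
```

The NodePorts used later in this guide (30000 to 30002) fit in the default range, so the wider range matters only for services that need lower port numbers.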


[root@master01 ~]# for node_ip in ${NODE_IPS[@]}

do

echo ">>> ${node_ip}"

ssh root@${node_ip} "kubeadm join 172.24.8.100:16443 --token xifg5c.3mvph3nwx1srdf7l --discovery-token-ca-cert-hash sha256:031a8758ddad5431be4132ecd6445f33b17c2192c11e010209705816a4a53afd"

ssh root@${node_ip} "systemctl enable kubelet.service"

done

Note: the commands above are run only on master01 and add all worker nodes to the cluster. If a join fails, reset the node as follows:

[root@node01 ~]# kubeadm reset

[root@node01 ~]# ifconfig cni0 down

[root@node01 ~]# ip link delete cni0

[root@node01 ~]# ifconfig flannel.1 down

[root@node01 ~]# ip link delete flannel.1

[root@node01 ~]# rm -rf /var/lib/cni/

[root@master01 ~]# kubectl get nodes # node status

[root@master01 ~]# kubectl get cs # component status

[root@master01 ~]# kubectl get serviceaccount # service accounts

[root@master01 ~]# kubectl cluster-info # cluster information

[root@master01 ~]# kubectl get pod -n kube-system -o wide # status of all system services


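Early versions of Kubernetes relied on Heapster for performance data collection and monitoring. Starting with version 1.8, performance data is exposed through a standardized interface, the Metrics API, and from version 1.10 Heapster was replaced by Metrics Server. In the new monitoring architecture, Metrics Server provides the core metrics: CPU and memory usage of Nodes and Pods. Monitoring of other, custom metrics is handled by components such as Prometheus.

Metrics Server depends on the API aggregation layer, which is enabled by default in kubeadm deployments.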

[root@master01 ~]# mkdir metrics

[root@master01 ~]# cd metrics/

[root@master01 metrics]# wget https://github.com/kubernetes-sigs/metrics-server/releases/download/v0.3.6/components.yaml

[root@master01 metrics]# vi components.yaml

……
apiVersion: apps/v1
kind: Deployment
……
spec:
  replicas: 3                                   # adjust the replica count to the cluster size
……
    spec:
      hostNetwork: true
……
      - name: metrics-server
        image: k8s.gcr.io/metrics-server-amd64:v0.3.6
        imagePullPolicy: IfNotPresent
        args:
          - --cert-dir=/tmp
          - --secure-port=4443
          - --kubelet-insecure-tls
          - --kubelet-preferred-address-types=InternalIP,Hostname,InternalDNS,ExternalDNS,ExternalIP   # append this arg
……

[root@master01 metrics]# kubectl apply -f components.yaml

[root@master01 metrics]# kubectl -n kube-system get pods -l k8s-app=metrics-server

NAME READY STATUS RESTARTS AGE

metrics-server-7b97647899-8txt4 1/1 Running 0 53s

metrics-server-7b97647899-btdwp 1/1 Running 0 53s

metrics-server-7b97647899-kbr8b 1/1 Running 0 53s

[root@master01 metrics]# kubectl top nodes

[root@master01 metrics]# kubectl top pods --all-namespaces

Note: the data provided by Metrics Server can also be consumed by the HPA controller to scale Pods automatically based on CPU utilization or memory usage.
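The core of the HPA scaling decision is the ratio between the observed metric and its target: desiredReplicas = ceil(currentReplicas × currentMetric / targetMetric). The sketch below reproduces only that arithmetic with integers. It is a simplification: the real controller also applies a tolerance band and the minReplicas and maxReplicas bounds, and the function name is made up for this example.

```shell
#!/bin/bash
# hpa_desired_replicas CURRENT_REPLICAS CURRENT_METRIC TARGET_METRIC
# Integer form of ceil(current_replicas * current_metric / target_metric).
hpa_desired_replicas() {
    local current_replicas=$1 current_metric=$2 target_metric=$3
    echo $(( (current_replicas * current_metric + target_metric - 1) / target_metric ))
}

# 3 replicas averaging 90% CPU against a 60% target.
echo "desired replicas: $(hpa_desired_replicas 3 90 60)"
```

In this example 3 × 90 / 60 = 4.5, which rounds up to 5 replicas.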


[root@master01 ~]# kubectl label nodes master01 dashboard=yes

[root@master01 ~]# kubectl label nodes master02 dashboard=yes

[root@master01 ~]# kubectl label nodes master03 dashboard=yes

This lab uses a certificate that was obtained free of charge and is valid for one year.

[root@master01 ~]# mkdir -p /root/dashboard/certs

[root@master01 ~]# cd /root/dashboard/certs

[root@master01 certs]# mv k8s.odocker.com tls.crt

[root@master01 certs]# mv k8s.odocker.com tls.key

[root@master01 certs]# ll

total 8.0K

-rw-r--r-- 1 root root 1.9K Jun 8 11:46 tls.crt

-rw-r--r-- 1 root root 1.7K Jun 8 11:46 tls.key

Note: a self-signed certificate can also be created manually as follows:

[root@master01 ~]# openssl req -x509 -nodes -days 365 -newkey rsa:2048 -keyout tls.key -out tls.crt -subj "/C=CN/ST=ZheJiang/L=HangZhou/O=Xianghy/OU=Xianghy/CN=k8s.odocker.com"

[root@master01 ~]# kubectl create ns kubernetes-dashboard # dashboard v2 runs in its own namespace

[root@master01 ~]# kubectl create secret generic kubernetes-dashboard-certs --from-file=$HOME/dashboard/certs/ -n kubernetes-dashboard

[root@master01 ~]# kubectl get secret kubernetes-dashboard-certs -n kubernetes-dashboard # check the new certificate secret

NAME TYPE DATA AGE

kubernetes-dashboard-certs Opaque 2 4s

[root@master01 ~]# cd /root/dashboard

[root@master01 dashboard]# wget https://raw.githubusercontent.com/kubernetes/dashboard/v2.0.1/aio/deploy/recommended.yaml

[root@master01 dashboard]# vi recommended.yaml

……
kind: Service
apiVersion: v1
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard
  namespace: kubernetes-dashboard
spec:
  type: NodePort                                # added
  ports:
    - port: 443
      targetPort: 8443
      nodePort: 30001                           # added
  selector:
    k8s-app: kubernetes-dashboard
---
……                                              # comment out everything below
#apiVersion: v1
#kind: Secret
#metadata:
#  labels:
#    k8s-app: kubernetes-dashboard
#  name: kubernetes-dashboard-certs
#  namespace: kubernetes-dashboard
#type: Opaque
……
kind: Deployment
……
  replicas: 3                                   # raised to 3 replicas
……
          imagePullPolicy: IfNotPresent         # changed image pull policy
          ports:
            - containerPort: 8443
              protocol: TCP
          args:
            - --auto-generate-certificates
            - --namespace=kubernetes-dashboard
            - --tls-key-file=tls.key
            - --tls-cert-file=tls.crt
            - --token-ttl=3600                  # append the args above
……
      nodeSelector:
        "beta.kubernetes.io/os": linux
        "dashboard": "yes"                      # schedule on the master nodes
……
kind: Service
apiVersion: v1
metadata:
  labels:
    k8s-app: dashboard-metrics-scraper
  name: dashboard-metrics-scraper
  namespace: kubernetes-dashboard
spec:
  type: NodePort                                # added
  ports:
    - port: 8000
      nodePort: 30000                           # added
      targetPort: 8000
  selector:
    k8s-app: dashboard-metrics-scraper
……
  replicas: 3                                   # raised to 3 replicas
……
      nodeSelector:
        "beta.kubernetes.io/os": linux
        "dashboard": "yes"                      # schedule on the master nodes
……

[root@master01 dashboard]# kubectl apply -f recommended.yaml

[root@master01 dashboard]# kubectl get deployment kubernetes-dashboard -n kubernetes-dashboard

[root@master01 dashboard]# kubectl get services -n kubernetes-dashboard

[root@master01 dashboard]# kubectl get pods -o wide -n kubernetes-dashboard

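Note: NodePort 30001/TCP on master01 maps to port 443 of the dashboard Service.

Note: dashboard v2 does not create an account with administrator privileges by default; one can be created as follows.

[root@master01 dashboard]# vi dashboard-admin.yaml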

---
apiVersion: v1
kind: ServiceAccount
metadata:
  name: admin-user
  namespace: kubernetes-dashboard
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: admin-user
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: cluster-admin
subjects:
- kind: ServiceAccount
  name: admin-user
  namespace: kubernetes-dashboard

[root@master01 dashboard]# kubectl apply -f dashboard-admin.yaml


[root@master01 ~]# cd /root/dashboard/certs

[root@master01 certs]# kubectl -n kubernetes-dashboard create secret tls kubernetes-dashboard-tls --cert=tls.crt --key=tls.key

[root@master01 certs]# kubectl -n kubernetes-dashboard describe secrets kubernetes-dashboard-tls

[root@master01 ~]# cd /root/dashboard/

[root@master01 dashboard]# vi dashboard-ingress.yaml

apiVersion: networking.k8s.io/v1beta1
kind: Ingress
metadata:
  name: kubernetes-dashboard-ingress
  namespace: kubernetes-dashboard
  annotations:
    kubernetes.io/ingress.class: "nginx"
    nginx.ingress.kubernetes.io/use-regex: "true"
    nginx.ingress.kubernetes.io/ssl-passthrough: "true"
    nginx.ingress.kubernetes.io/rewrite-target: /
    nginx.ingress.kubernetes.io/ssl-redirect: "true"
    #nginx.ingress.kubernetes.io/secure-backends: "true"
    nginx.ingress.kubernetes.io/backend-protocol: "HTTPS"
    nginx.ingress.kubernetes.io/proxy-connect-timeout: "600"
    nginx.ingress.kubernetes.io/proxy-read-timeout: "600"
    nginx.ingress.kubernetes.io/proxy-send-timeout: "600"
    nginx.ingress.kubernetes.io/configuration-snippet: |
      proxy_ssl_session_reuse off;
spec:
  rules:
  - host: k8s.odocker.com
    http:
      paths:
      - path: /
        backend:
          serviceName: kubernetes-dashboard
          servicePort: 443
  tls:
  - hosts:
    - k8s.odocker.com
    secretName: kubernetes-dashboard-tls

[root@master01 dashboard]# kubectl apply -f dashboard-ingress.yaml

[root@master01 dashboard]# kubectl -n kubernetes-dashboard get ingress


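Import the k8s.odocker.com certificate into the browser and mark it as trusted; the import steps are omitted here.

Logging in with a raw token is cumbersome. The token can instead be added to a kubeconfig file, and that file used to log in to the dashboard.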

[root@master01 dashboard]# ADMIN_SECRET=$(kubectl -n kubernetes-dashboard get secret | grep admin-user | awk '{print $1}')

[root@master01 dashboard]# DASHBOARD_LOGIN_TOKEN=$(kubectl describe secret -n kubernetes-dashboard ${ADMIN_SECRET} | grep -E '^token' | awk '{print $2}')

[root@master01 dashboard]# kubectl config set-cluster kubernetes \
    --certificate-authority=/etc/kubernetes/pki/ca.crt \
    --embed-certs=true \
    --server=https://172.24.8.100:16443 \
    --kubeconfig=local-hakek8s-dashboard-admin.kubeconfig # set the cluster parameters

[root@master01 dashboard]# kubectl config set-credentials dashboard_user \
    --token=${DASHBOARD_LOGIN_TOKEN} \
    --kubeconfig=local-hakek8s-dashboard-admin.kubeconfig # set the client credentials, using the token obtained above

[root@master01 dashboard]# kubectl config set-context default \
    --cluster=kubernetes \
    --user=dashboard_user \
    --kubeconfig=local-hakek8s-dashboard-admin.kubeconfig # set the context parameters

[root@master01 dashboard]# kubectl config use-context default --kubeconfig=local-hakek8s-dashboard-admin.kubeconfig # make it the default context

This lab reaches the dashboard through the domain name exposed by the Ingress (k8s.odocker.com) and logs in with the local-hakek8s-dashboard-admin.kubeconfig file.


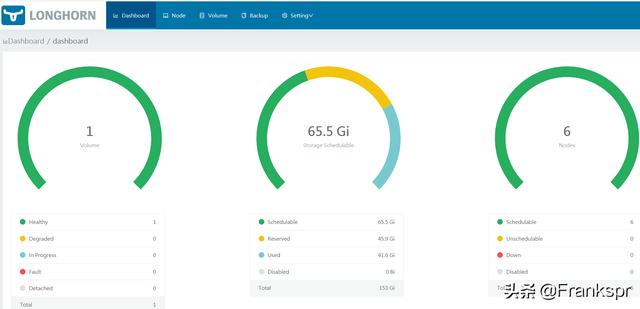

Longhorn is an open-source distributed block storage system for Kubernetes.

[root@master01 ~]# source environment.sh

[root@master01 ~]# for all_ip in ${ALL_IPS[@]}

do

echo ">>> ${all_ip}"

ssh root@${all_ip} "yum -y install iscsi-initiator-utils &"

done

Note: this package must be installed on all nodes.

[root@master01 ~]# mkdir longhorn

[root@master01 ~]# cd longhorn/

[root@master01 longhorn]# wget https://raw.githubusercontent.com/longhorn/longhorn/master/deploy/longhorn.yaml

[root@master01 longhorn]# vi longhorn.yaml

#……
---
kind: Service
apiVersion: v1
metadata:
  labels:
    app: longhorn-ui
  name: longhorn-frontend
  namespace: longhorn-system
spec:
  type: NodePort                                # changed to NodePort
  selector:
    app: longhorn-ui
  ports:
  - port: 80
    targetPort: 8000
    nodePort: 30002
---
……
kind: DaemonSet
……
        imagePullPolicy: IfNotPresent
……
#……

[root@master01 longhorn]# kubectl apply -f longhorn.yaml

[root@master01 longhorn]# kubectl -n longhorn-system get pods -o wide

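Note: if the deployment fails, delete it and deploy again. If the namespace cannot be deleted, fetch uninstall.yaml with wget and clean up as follows:

rm -rf /var/lib/longhorn/

kubectl apply -f uninstall.yaml

kubectl delete -f longhorn.yaml

Note: a StorageClass is already created by default once Longhorn is deployed; one can also be created manually from a YAML file such as the one below.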

[root@master01 longhorn]# kubectl get sc

NAME PROVISIONER RECLAIMPOLICY VOLUMEBINDINGMODE ALLOWVOLUMEEXPANSION AGE

……

longhorn driver.longhorn.io Delete Immediate true 15m

[root@master01 longhorn]# vi longhornsc.yaml

kind: StorageClass
apiVersion: storage.k8s.io/v1
metadata:
  name: longhornsc
provisioner: driver.longhorn.io
parameters:
  numberOfReplicas: "3"
  staleReplicaTimeout: "30"
  fromBackup: ""

[root@master01 longhorn]# kubectl create -f longhornsc.yaml

[root@master01 longhorn]# vi longhornpod.yaml

apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: longhorn-pvc
spec:
  accessModes:
  - ReadWriteOnce
  storageClassName: longhorn
  resources:
    requests:
      storage: 2Gi
---
apiVersion: v1
kind: Pod
metadata:
  name: longhorn-pod
  namespace: default
spec:
  containers:
  - name: volume-test
    image: nginx:stable-alpine
    imagePullPolicy: IfNotPresent
    volumeMounts:
    - name: volv
      mountPath: /data
    ports:
    - containerPort: 80
  volumes:
  - name: volv
    persistentVolumeClaim:
      claimName: longhorn-pvc

[root@master01 longhorn]# kubectl apply -f longhornpod.yaml

[root@master01 longhorn]# kubectl get pods

[root@master01 longhorn]# kubectl get pvc

[root@master01 longhorn]# kubectl get pv

[root@master01 longhorn]# yum -y install httpd-tools

[root@master01 longhorn]# htpasswd -c auth xhy # create the username and password

Note: the entry can also be created with the following command:

USER=xhy; PASSWORD=x120952576; echo "${USER}:$(openssl passwd -stdin -apr1 <<< ${PASSWORD})" >> auth

[root@master01 longhorn]# kubectl -n longhorn-system create secret generic longhorn-basic-auth --from-file=auth

[root@master01 longhorn]# vi longhorn-ingress.yaml # create the Ingress rule

apiVersion: networking.k8s.io/v1beta1
kind: Ingress
metadata:
  name: longhorn-ingress
  namespace: longhorn-system
  annotations:
    nginx.ingress.kubernetes.io/auth-type: basic
    nginx.ingress.kubernetes.io/auth-secret: longhorn-basic-auth
    nginx.ingress.kubernetes.io/auth-realm: 'Authentication Required '
spec:
  rules:
  - host: longhorn.odocker.com
    http:
      paths:
      - path: /
        backend:
          serviceName: longhorn-frontend
          servicePort: 80

[root@master01 longhorn]# kubectl apply -f longhorn-ingress.yaml

Open longhorn.odocker.com in a browser and enter the username and password.



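About the author: works on cloud computing, virtualization, and Linux; always happy to exchange ideas.

Copyright of this article belongs to the author. Reposting is welcome, but unless the author agrees otherwise this notice must be kept and shown prominently on the page. For other questions, contact the author by email (xhy@itzgr.com).

Original link: https://www.cnblogs.com/itzgr/p/13139247.html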